In self-driving cars, reliable Convolutional Neural Networks (CNNs) are essential. With their help, artificial intelligence (AI) is supposed to recognize other road users automatically. However, the more autonomously a car drives, the greater the demands on the safety of its algorithms. To protect human life, a deep understanding of the inner workings of these neural networks is necessary. A CNN, however, is inherently a black box: its complex decision paths are difficult to follow, which makes it hard to assess safety risks. Appropriate visualization can address these challenges. For this purpose, ARRK Engineering GmbH has developed a validation tool for analyzing the decision-making processes of CNNs. Its interactive visualization gives deeper insight into each layer of a CNN. All neuron weights can be adjusted manually to see their impact on the final object recognition. Furthermore, the influence of various confounding factors and of certain training methods can be detected easily, so that the CNN can be optimized in a later step.

The current methods for analyzing and validating neural networks originate primarily from scientific research. However, these methods rarely take industry-standard functional safety requirements into account. For example, ISO 26262 and ISO/PAS 21448 require automotive manufacturers to have a much more comprehensive knowledge of the concrete functioning and decision paths of neural networks than scientific discourse has addressed to date. “Therefore, in order to better understand the issues in object recognition by CNNs, we have developed software that enables standardized validation,” reports Václav Diviš, Senior Engineer ADAS & Autonomous Driving at ARRK Engineering. “While developing this visualization tool, we laid the foundation for a new evaluation method: the so-called Neurons’ Criticality Analysis (NCA).” Based on this principle, a reliable statement can be made about how important or harmful individual neurons are for correct object recognition.

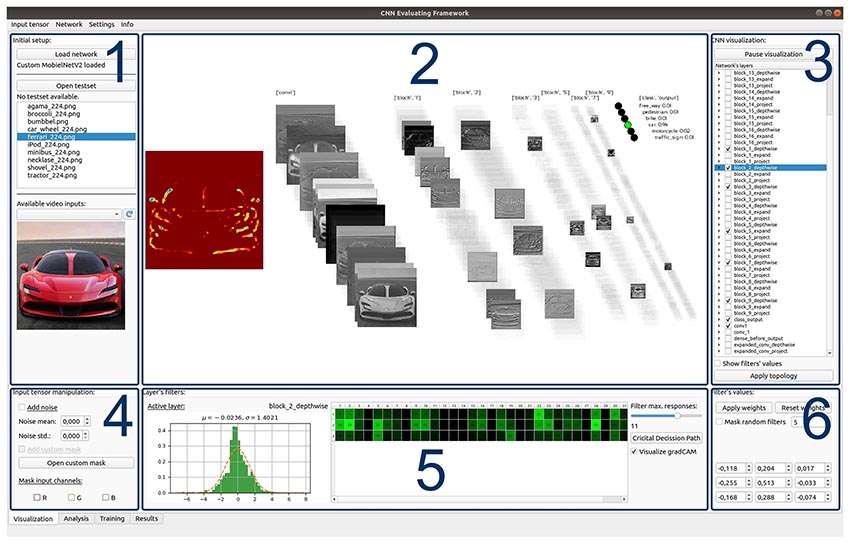

Interactive visualization of decision-making processes

The interaction of the individual neurons in the numerous layers of a CNN is extremely complex. Each layer and each neuron performs a specific task in recognizing an object, for example a rough sorting by shape or the filtering of certain colors. However, each step contributes differently to correct object recognition and can, in some cases, even worsen the result. Because of this complexity, the importance of individual neurons for the final decision has so far been impossible to determine. ARRK Engineering has therefore developed an interactive, user-friendly graphical interface to visualize these paths. “In this way, the decision-making process of a CNN can be represented visually,” Diviš said. “In addition, the relevance of certain neurons to the final decision can be increased, decreased or even eliminated. After each adjustment, the tool immediately determines the impact of the changed parameters in real time. This makes it easier to identify and understand the importance of certain neurons and their tasks.” The data stream can be paused at any time for calm, convenient analysis. While it is paused, the individual elements can be examined in more detail through intuitive controls.
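The effect of increasing, decreasing or eliminating a neuron's relevance can be illustrated with a toy model. The sketch below is not ARRK's implementation. It assumes a hypothetical single dense layer on top of a fixed vector of neuron activations. It multiplies each activation by a user-chosen factor (0 eliminates the neuron) and then recomputes the softmax class probabilities. The names `classify` and `CLASSES` and all numeric values are illustrative assumptions.

```python
import math

CLASSES = ["pedestrian", "cyclist", "vehicle"]

def softmax(logits):
    """Convert raw class scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def classify(activations, weights, scales=None):
    """Hypothetical dense layer + softmax; scales[i] multiplies neuron i.

    scales: per-neuron factors (1.0 = unchanged, >1 = increased,
    <1 = decreased, 0.0 = eliminated).
    """
    if scales is None:
        scales = [1.0] * len(activations)
    scaled = [a * s for a, s in zip(activations, scales)]
    logits = [sum(w * x for w, x in zip(row, scaled)) for row in weights]
    return softmax(logits)

# Hypothetical activations of three neurons and made-up class weights.
activations = [2.0, 0.5, 1.0]
weights = [
    [1.5, 0.2, -0.3],  # pedestrian
    [0.1, 1.0, 0.4],   # cyclist
    [-0.5, 0.3, 1.2],  # vehicle
]

baseline = classify(activations, weights)
without_n0 = classify(activations, weights, scales=[0.0, 1.0, 1.0])
for name, p0, p1 in zip(CLASSES, baseline, without_n0):
    print(f"{name:10s} {p0:.3f} -> {p1:.3f}")
```

In this toy setup, eliminating neuron 0 moves the top class from pedestrian to vehicle. A single neuron can therefore carry the decision on its own.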

For the visual rendering, the experts at ARRK Engineering chose the cross-platform programming interface OpenGL to ensure the greatest possible flexibility. This means that the software can be used on any device, be it a PC, cell phone or tablet. “We also placed particular emphasis on optimizing the calculations and the subsequent graphical display,” explains Diviš. “For this reason, our final benchmark tests focused in particular on frames per second (FPS). We found that both when processing a video and when using a webcam, the frame rate remained stable at around 5 FPS, even when visualizing 90 percent of all possible feature maps, which corresponds to roughly 10,000 textures.” Despite the large amount of graphical information and data, no FPS fluctuations are therefore to be expected.
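A benchmark like this typically measures frame rate as the number of frames rendered over a recent time window. The following is a minimal, hypothetical sketch of a sliding-window FPS meter and is not the tool's benchmark code. Timestamps are passed in explicitly. In a real render loop they could come from `time.perf_counter()`; here they are simulated.

```python
from collections import deque

class FpsMeter:
    """Hypothetical sliding-window frames-per-second estimator."""

    def __init__(self, window_seconds=1.0):
        self.window = window_seconds
        self.stamps = deque()  # completion times of recent frames

    def tick(self, timestamp):
        """Record a finished frame at `timestamp` (seconds) and return the current FPS."""
        self.stamps.append(timestamp)
        # Discard frames that are older than the window.
        while self.stamps and timestamp - self.stamps[0] > self.window:
            self.stamps.popleft()
        if len(self.stamps) < 2:
            return 0.0
        span = self.stamps[-1] - self.stamps[0]
        # N timestamps bound N - 1 frame intervals.
        return (len(self.stamps) - 1) / span if span > 0 else 0.0

# Simulated render loop: one frame every 200 ms, i.e. about 5 FPS.
meter = FpsMeter(window_seconds=1.0)
fps = 0.0
for i in range(11):
    fps = meter.tick(i * 0.2)
print(f"{fps:.1f} FPS")
```

Averaging over a window rather than timing single frames smooths out jitter. A stable reading over many windows is what "no FPS fluctuations" would look like in such a measurement.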

Analysis of the critical and anti-critical neurons

To train the CNN within ARRK Engineering’s visualization tool, the deep learning APIs TensorFlow and Keras serve as the basis, providing a flexible Python implementation of all required classes and functions. Other external libraries can also be connected easily. Once the neural network has been sufficiently trained, the analysis of critical and anti-critical neurons, the Neurons’ Criticality Analysis, can begin. “For this, we offer modifications such as adding random noise, removing color filters and masking user-defined areas,” Diviš explains. “Changing these values shows directly how much individual neurons influence the final decision. It also reveals which parts of the neural network may be interfering with the overall recognition process.”
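The three modifications Diviš names can be sketched on an image stored as rows of RGB pixel tuples. This is an illustrative plain-Python sketch. The function names are hypothetical and are not the tool's API. In practice, equivalent operations would be applied to NumPy arrays or TensorFlow tensors before the image is fed to the network.

```python
import random

def add_noise(image, sigma, seed=0):
    """Add Gaussian noise (std. dev. `sigma`) to every channel, clipped to 0..255."""
    rng = random.Random(seed)  # seeded so results are reproducible
    return [
        [tuple(min(255, max(0, round(c + rng.gauss(0.0, sigma)))) for c in px) for px in row]
        for row in image
    ]

def remove_channel(image, channel):
    """Zero out one color channel (0 = red, 1 = green, 2 = blue)."""
    return [
        [tuple(0 if i == channel else c for i, c in enumerate(px)) for px in row]
        for row in image
    ]

def mask_region(image, top, left, height, width, fill=(0, 0, 0)):
    """Replace a user-defined rectangle with a constant fill color."""
    out = [list(row) for row in image]
    for y in range(top, min(top + height, len(out))):
        for x in range(left, min(left + width, len(out[y]))):
            out[y][x] = fill
    return out

# Hypothetical 4x4 mid-gray test image.
image = [[(128, 128, 128)] * 4 for _ in range(4)]
noisy = add_noise(image, sigma=10.0)
no_red = remove_channel(image, channel=0)
masked = mask_region(image, top=1, left=1, height=2, width=2)
```

Comparing the network's output for `image` with its output for each perturbed variant indicates how sensitive the decision is to that particular factor.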

A sophisticated algorithm automatically calculates the criticality of each individual neuron. If a neuron’s criticality value exceeds a certain threshold, the neuron is considered to influence correct image recognition. This critical threshold can be adjusted as desired. “The final definition of this threshold depends on numerous factors, for example functional safety requirements, but ethical aspects also play a role here and should not be underestimated,” Diviš adds. “Beyond that, the value can be adjusted as needed, which gives the tool the greatest possible flexibility.” As a result, the tool is not tied to current requirements and standards, but can be adapted to new requirements at any time if safety regulations change.
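ARRK does not publish its criticality algorithm, so the following is only a stand-in built on an explicit assumption. Here, a neuron's criticality is the drop in the correct class's probability when that neuron is ablated (set to zero). Positive scores mark neurons the decision depends on (critical). Negative scores mark neurons whose removal improves the result (anti-critical). A user-adjustable threshold decides which scores are flagged. The toy dense layer, its weights and the function names are hypothetical.

```python
import math

def softmax(logits):
    """Convert raw class scores into probabilities."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def class_probs(activations, weights):
    """Hypothetical toy read-out: one dense layer followed by softmax."""
    logits = [sum(w * a for w, a in zip(row, activations)) for row in weights]
    return softmax(logits)

def criticality(activations, weights, true_class):
    """ASSUMED ablation score: p(true) minus p(true) with neuron i zeroed."""
    base = class_probs(activations, weights)[true_class]
    scores = []
    for i in range(len(activations)):
        ablated = list(activations)
        ablated[i] = 0.0  # remove this neuron's contribution
        scores.append(base - class_probs(ablated, weights)[true_class])
    return scores

def label_neurons(scores, threshold):
    """Compare each score with the user-adjustable threshold."""
    labels = []
    for s in scores:
        if s > threshold:
            labels.append("critical")       # removal hurts the correct class
        elif s < -threshold:
            labels.append("anti-critical")  # removal helps the correct class
        else:
            labels.append("neutral")
    return labels

# Hypothetical activations and weights (rows: pedestrian, cyclist, vehicle).
activations = [2.0, 0.5, 1.0]
weights = [[1.5, 0.2, -0.3], [0.1, 1.0, 0.4], [-0.5, 0.3, 1.2]]
scores = criticality(activations, weights, true_class=0)
print(label_neurons(scores, threshold=0.05))
```

With a threshold of 0.05, neuron 0 is flagged as critical, neuron 1 as neutral and neuron 2 as anti-critical. Lowering the threshold to 0.01 also flags neuron 1 as anti-critical, which shows how the chosen threshold shapes the result.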

Increased safety through a deeper understanding of CNNs

With the visualization tool, ARRK Engineering enables graphical validation of neural networks. Building on the software and the NCA, further steps can now reduce safety risks in autonomous driving through additional plausibility-check mechanisms. “Our goal is to minimize or better partition the number of critical neurons so that we can rely on robust image recognition by the AI,” Diviš sums up. “So we’re already looking forward to feedback from the field, as this will allow us to further optimize the tool.” ARRK Engineering is currently working, for example, on extending the classification with object detection. The remaining challenge is that, in addition to the class, the coordinates of the objects must also be visualized clearly.
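Moving from classification to object detection means that each result carries a bounding box in addition to a class label and score. The sketch below is a hypothetical illustration, not the planned feature. It stores detections as simple records and draws their box outlines onto a character grid, the simplest stand-in for an overlay on the camera image. The `Detection` record, `draw_boxes` and the `min_score` cut-off are assumptions.

```python
from dataclasses import dataclass

@dataclass
class Detection:
    """Hypothetical detection record: class, confidence and box in pixels."""
    label: str
    score: float
    x: int  # left edge
    y: int  # top edge
    width: int
    height: int

def draw_boxes(grid_width, grid_height, detections, min_score=0.5):
    """Render outlines of detections with score >= min_score as text rows."""
    grid = [["." for _ in range(grid_width)] for _ in range(grid_height)]
    for det in detections:
        if det.score < min_score:
            continue  # low-confidence detections are not drawn
        mark = det.label[0].upper()  # first letter identifies the class
        x0, y0 = det.x, det.y
        x1, y1 = det.x + det.width - 1, det.y + det.height - 1
        for yy in range(max(0, y0), min(grid_height, y1 + 1)):
            for xx in range(max(0, x0), min(grid_width, x1 + 1)):
                if xx in (x0, x1) or yy in (y0, y1):  # outline only
                    grid[yy][xx] = mark
    return ["".join(row) for row in grid]

detections = [
    Detection("pedestrian", 0.91, x=1, y=1, width=3, height=4),
    Detection("vehicle", 0.78, x=6, y=2, width=5, height=3),
    Detection("cyclist", 0.30, x=0, y=0, width=2, height=2),  # below cut-off
]
lines = draw_boxes(12, 6, detections)
for line in lines:
    print(line)
```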

(For more information, visit: www.arrk-engineering.com)

What is a Convolutional Neural Network (CNN)?

A CNN is a neural network that can make object recognition decisions independently. Analogous to the human brain, it uses neurons that specialize in certain tasks, for example edge detection or color recognition. Several layers are stacked to filter and evaluate specific aspects of an image. At the end of the process, the CNN calculates the probability that the object is, for example, a pedestrian, cyclist or vehicle.
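The pipeline described above can be shown in miniature: filters that respond to specific image features, followed by layers that condense them into class probabilities. This pure-Python sketch applies one hand-picked 3x3 vertical-edge filter, a ReLU, global average pooling and a softmax over three classes. The filter and class weights are made-up values for illustration. A real CNN learns them during training and uses many filters per layer.

```python
import math

def conv2d(image, kernel):
    """Valid 2D convolution (cross-correlation, as in most CNN libraries)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    return [
        [
            sum(kernel[i][j] * image[y + i][x + j] for i in range(kh) for j in range(kw))
            for x in range(out_w)
        ]
        for y in range(out_h)
    ]

def relu(feature_map):
    """Keep positive responses, set negative ones to zero."""
    return [[max(0.0, v) for v in row] for row in feature_map]

def global_average(feature_map):
    """Condense a feature map into a single number."""
    values = [v for row in feature_map for v in row]
    return sum(values) / len(values)

def softmax(logits):
    """Turn class scores into probabilities."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical 5x5 grayscale patch: dark left part, bright right part.
patch = [[0, 0, 1, 1, 1] for _ in range(5)]
vertical_edge = [[-1, 0, 1], [-1, 0, 1], [-1, 0, 1]]  # hand-picked filter

feature = global_average(relu(conv2d(patch, vertical_edge)))
# Made-up linear read-out from the single feature to three class scores.
class_weights = {"pedestrian": 2.0, "cyclist": 0.5, "vehicle": -1.0}
probs = softmax([w * feature for w in class_weights.values()])
for name, p in zip(class_weights, probs):
    print(f"{name}: {p:.2f}")
```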

What are ISO 26262 and ISO/PAS 21448?

ISO 26262 is an automotive industry standard for functional safety. It classifies vehicle functions by their potential risk into so-called “Automotive Safety Integrity Levels”, ASIL for short. This 5-level scale (QM, A, B, C, D) defines different process requirements for product development. At QM, for example, “usual quality assurance” through an established development process is sufficient. From ASIL A onwards, additional risk reduction measures must be taken with the aid of monitoring diagnostics and plausibility functions. ASIL D applies to products with the highest risk potential and thus the highest safety requirements.
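The level itself is assigned during hazard analysis from three parameters defined in ISO 26262-3: severity (S1 to S3), probability of exposure (E1 to E4) and controllability (C1 to C3). The standard specifies this mapping as a lookup table. A well-known shortcut reproduces that table: add the three class indices, so that a sum of 10 gives ASIL D, 9 gives ASIL C, 8 gives ASIL B and 7 gives ASIL A, and anything lower gives QM. The sketch below encodes this shortcut as an illustration. It is not a substitute for the normative table, and the function name is hypothetical.

```python
def determine_asil(severity, exposure, controllability):
    """Map S (0..3), E (0..4) and C (0..3) class indices to an ASIL.

    Uses the index-sum shortcut that reproduces the ISO 26262-3 table;
    a class of 0 (S0, E0 or C0) means no ASIL is assigned, i.e. QM.
    """
    if not (0 <= severity <= 3 and 0 <= exposure <= 4 and 0 <= controllability <= 3):
        raise ValueError("class index out of range")
    if 0 in (severity, exposure, controllability):
        return "QM"
    total = severity + exposure + controllability
    return {10: "D", 9: "C", 8: "B", 7: "A"}.get(total, "QM")

print(determine_asil(3, 4, 3))  # D: severe, frequent, hard to control
print(determine_asil(2, 3, 2))  # A
```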

ISO/PAS 21448 contains supplementary guidelines on the design, verification and validation measures for autonomous driving that are required to achieve the so-called Safety of the Intended Functionality (SOTIF). The focus is particularly on correct perception by complex sensors and the associated processing algorithms.


ARRK Engineering is part of the international ARRK group of companies and specializes in product development. With the help of our competencies in Electronics & Software, CAE, Materials, Acoustics, Composites, Body & High-Voltage Storage, Powertrain, Chassis, Interior & Exterior, Optical Systems, Passive Safety and Thermal Management, we develop products for our customers holistically and independently as a long-standing strategic development partner. Within the ARRK group of companies, we implement product developments from virtual development to prototypes and small series production. The globally active ARRK Engineering Division has locations in Germany, Romania, the UK, Japan and China. The company employs more than 1,250 people. For more information, visit www.arrk-engineering.com.

ARRK ENGINEERING GMBH

Frankfurter Ring 160, 80807 Munich

Tel.: 089 31857-0, Fax: 089 31857-111

E-mail: info@arrk-engineering.com

Internet: www.arrk-engineering.com